Designing AI Quality Systems

A Four-Part Framework for AI-Generated Commerce Video

December 2025 · Kenneth Hung

A four-part case study that uses TikTok Shop as the test case for a generative AI video quality framework with three layers: Floor (binary safety gate), Ceiling (numeric optimization target), and Style (categorical contextual fit).

Part 1 introduces the framework.

Part 2 scales it across eight verticals and three markets.

Part 3 applies it to cross-regional adaptation (one product, three markets).

Part 4 applies it to authenticity (making AI-generated content feel human).

The work surfaces what AI Behavior Design actually means as a practice: defining category-specific safety thresholds, calibrating quality benchmarks against top performers, codifying style match across format-aesthetic-category combinations, and authoring the rubrics that turn human judgment into machine-learnable signals.

The result is both a working framework portable across AI commerce platforms and an operational portrait of an emerging design discipline that sits between policy work and ML engineering.

Part 1: Floor / Ceiling / Style for AI-Generated Commerce Video

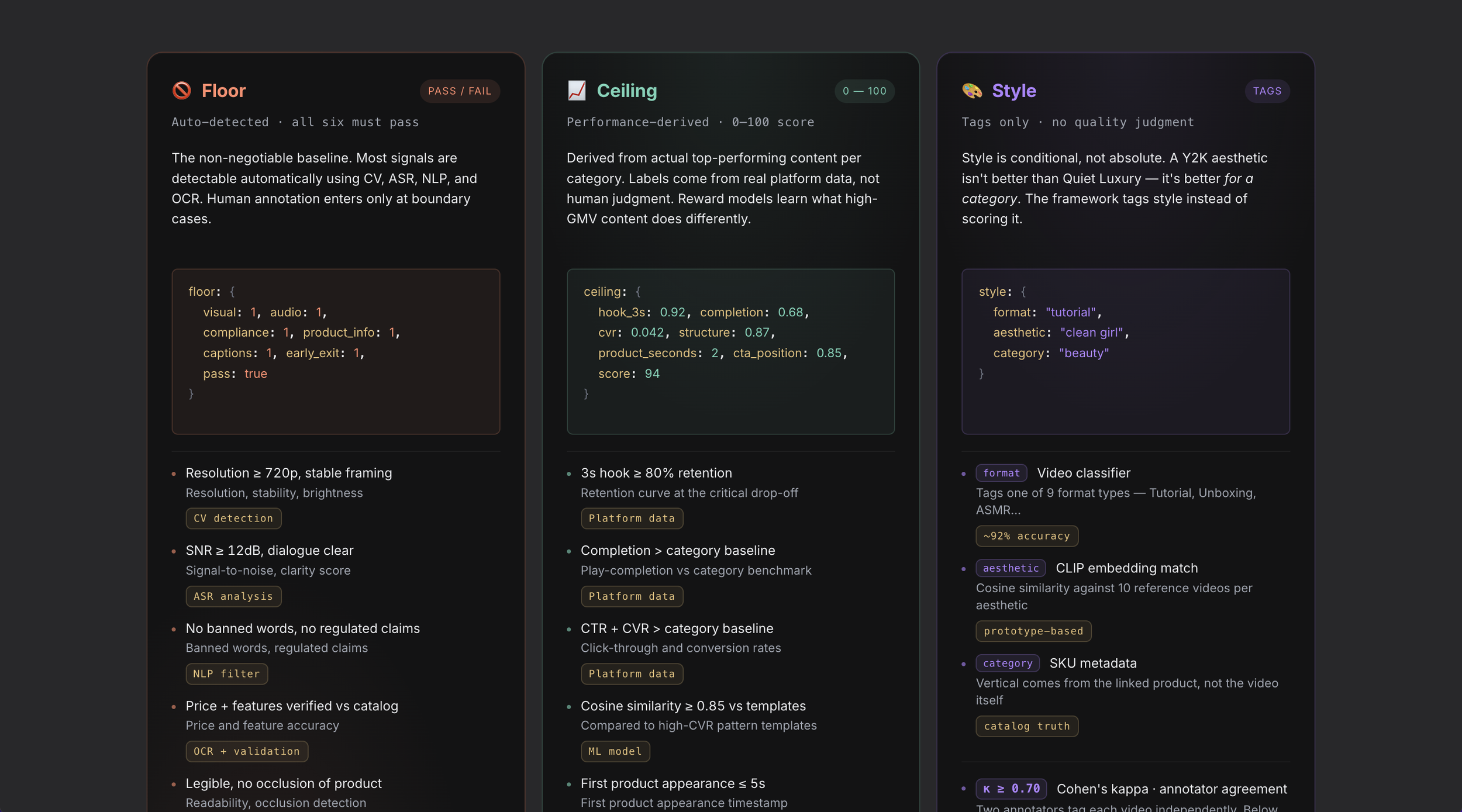

Introduces a three-layer quality framework for AI-generated commerce video: Floor as a binary safety gate, Ceiling as a numeric optimization target benchmarked against top performers, and Style as a categorical match across format, aesthetic, and category.
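To make the three layers concrete, here is a minimal Python sketch of how a single video might be evaluated. It is an illustration under stated assumptions, not the case study's implementation: the names `QualityResult`, `evaluate_video`, and `StyleKey` are hypothetical, the Ceiling is assumed to be reported as a ratio against a top-performer benchmark, and a failed Floor check is assumed to block the other two layers.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Set, Tuple

# Hypothetical encoding of the Style layer: (format, aesthetic, category).
StyleKey = Tuple[str, str, str]


@dataclass(frozen=True)
class QualityResult:
    floor_passed: bool              # Floor: binary safety gate
    ceiling_ratio: Optional[float]  # Ceiling: score relative to the benchmark
    style_match: Optional[bool]     # Style: categorical match


def evaluate_video(
    floor_checks: Dict[str, bool],
    raw_score: float,
    benchmark_score: float,
    style: StyleKey,
    allowed_styles: Set[StyleKey],
) -> QualityResult:
    """Apply Floor, then Ceiling and Style, to one video."""
    if benchmark_score <= 0:
        raise ValueError("benchmark_score must be positive")

    # Assumption: any failed Floor check blocks the video outright,
    # so Ceiling and Style are not evaluated at all.
    if not all(floor_checks.values()):
        return QualityResult(False, None, None)

    # Assumption: Ceiling is expressed as a ratio to the benchmark.
    ceiling_ratio = raw_score / benchmark_score

    # Style is a membership test, not a score.
    return QualityResult(True, ceiling_ratio, style in allowed_styles)
```

The return type mirrors the framework's split: a boolean for Floor, a number for Ceiling, and a categorical yes/no for Style, so each layer can be fed by a different annotation method.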

Covers how each layer maps to a distinct annotation method, how the framework turns human judgment into machine-learnable signals, and where bias enters when quality systems codify behavior without asking who that behavior serves.

The result is both a quality specification and a responsible AI structure, grounded in how Anthropic, OpenAI, and Google DeepMind encode AI behavior using similar architectural shapes.

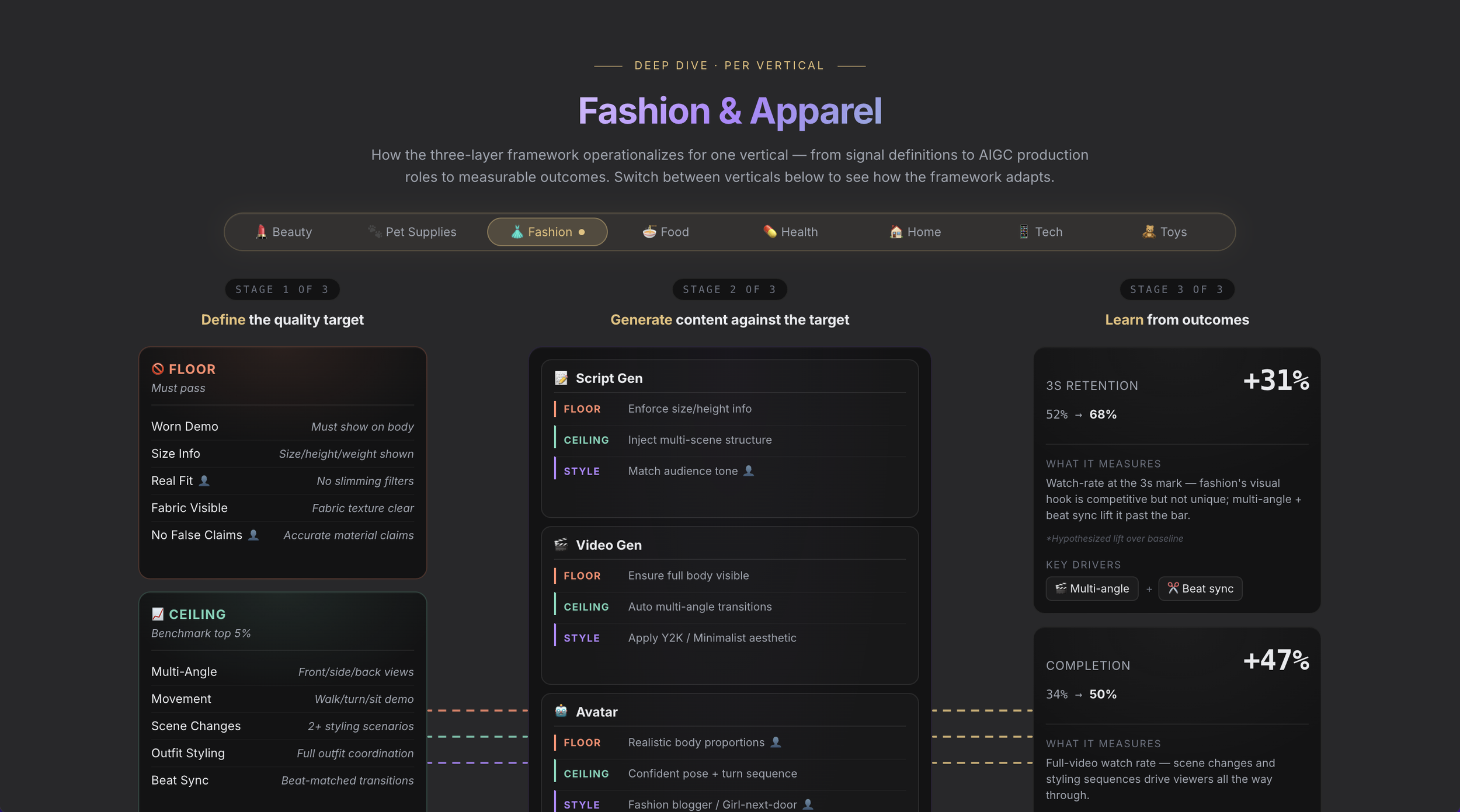

Part 2: Scaling the Framework Across Verticals, Markets, and Execution

Picks up where Part 1 left off and covers how the framework scales: eight verticals with their own Floor / Ceiling / Style configurations, three markets launching the same product under different regulatory regimes, and ten failure modes the framework can hit at scale.
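One way to picture per-vertical configurations that vary by market is a base configuration per vertical plus market-specific Floor additions. The sketch below is an assumption-heavy illustration: `LayerConfig`, `resolve_config`, the vertical and market names, and every rule name and number are placeholders, and it assumes regulatory differences only add Floor rules rather than changing Ceiling or Style.

```python
from dataclasses import dataclass, replace
from typing import Dict, FrozenSet, Tuple


@dataclass(frozen=True)
class LayerConfig:
    floor_rules: FrozenSet[str]     # binary checks that must all pass
    ceiling_target: float           # numeric optimization target
    allowed_styles: FrozenSet[str]  # accepted categorical styles


# Placeholder data: one hypothetical vertical with made-up values.
VERTICAL_DEFAULTS: Dict[str, LayerConfig] = {
    "example_vertical": LayerConfig(
        floor_rules=frozenset({"example_base_rule"}),
        ceiling_target=0.8,
        allowed_styles=frozenset({"example_style"}),
    ),
}

# Assumption: a market's regulatory regime only adds Floor rules.
MARKET_EXTRA_FLOOR_RULES: Dict[Tuple[str, str], FrozenSet[str]] = {
    ("example_vertical", "market_b"): frozenset({"example_market_rule"}),
}


def resolve_config(vertical: str, market: str) -> LayerConfig:
    """Merge a vertical's defaults with any market-specific Floor rules."""
    base = VERTICAL_DEFAULTS[vertical]
    extra = MARKET_EXTRA_FLOOR_RULES.get((vertical, market), frozenset())
    return replace(base, floor_rules=base.floor_rules | extra)
```

Keeping the market layer additive means the same product can launch in several markets from one vertical definition, with only the safety gate tightening per regime.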

Introduces AI Behavior Design as a practice, with four responsibilities, a 90-day plan to stand up the function, and a sample rubric criterion showing the depth of production rubric authoring.

The result is both a scaling playbook portable across AI commerce platforms and an operational portrait of the design discipline that runs the eval and rubric layer between policy and ML engineering.

Part 3: Cross-Regional Adaptation. From Framework to Applied Concept

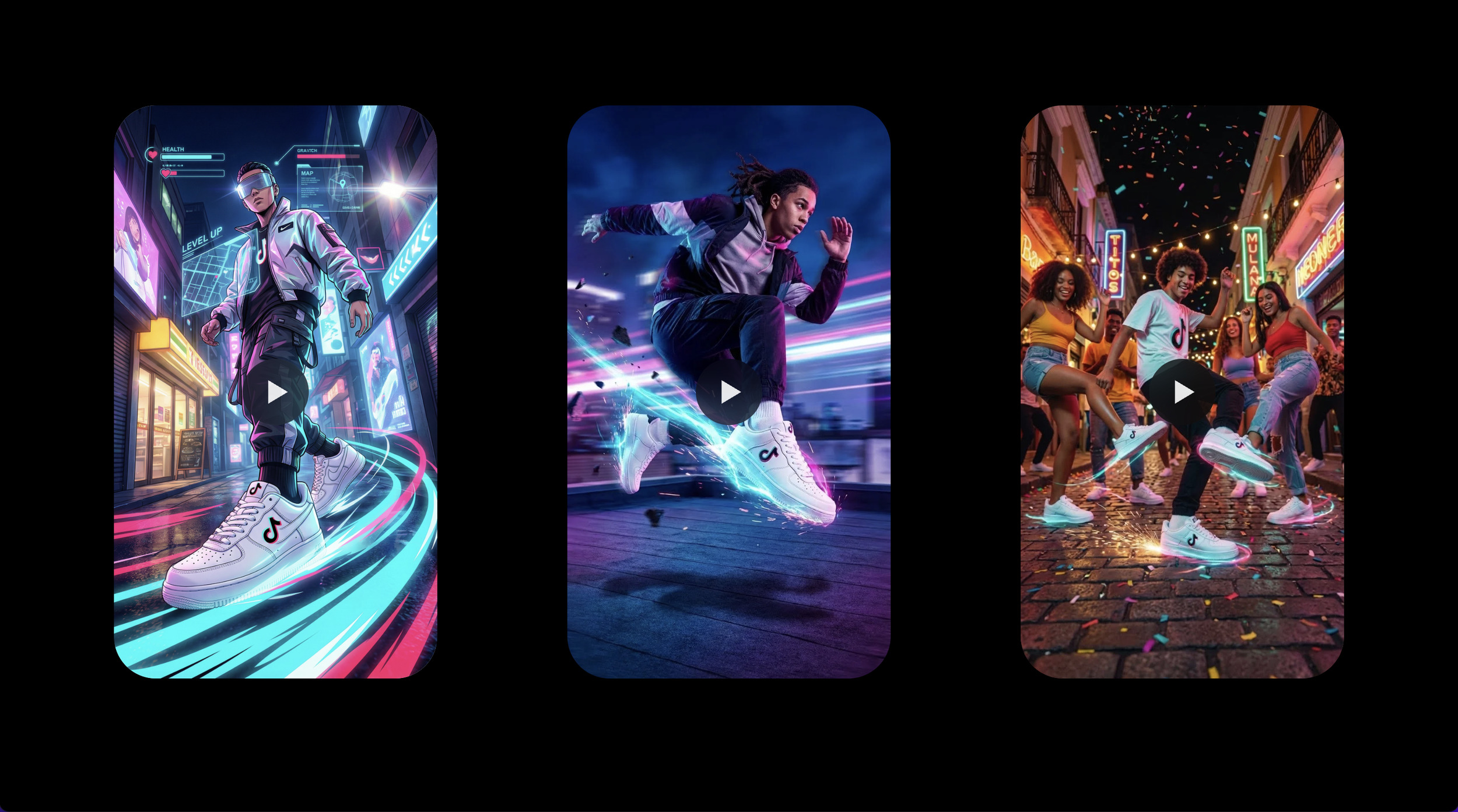

Walks through a 7-step seller journey using TikTok Shop AIGC: one sneaker product, three target markets (APAC, US/EU, LATAM), three regionally adapted video concepts ("Neon Pulse," "Hero Steps," "Rhythm Run"), and per-region quality scoring against Ceiling benchmarks.

Documents what current AIGC video tools can actually deliver. Four challenge tables cover technical limits (visual consistency, temporal stability, physics, brand safety), workflow constraints (asset dependencies, non-determinism), product solutions (control vs automation, editing as core value), and e-commerce regional issues (compliance, localization, UGC perception).

The result is both a UX flow for cross-regional video generation at scale and an honest accounting of where AIGC tools currently fall short and how product design can compensate.

Part 4: Authenticity Through Imperfection. Making AI Content Feel Human

Builds a reusable framework that makes AI-generated TikTok content feel like authentic human creation rather than AI production. Diagnoses five failure modes (script, footage, performance, audio, editing) where AI content reads as too perfect, and constructs a three-layer prompt architecture (Style, Ceiling, Floor) that constrains AI generation toward imperfection.

Walks through a five-scene narrative template (pain point → failed solutions → discovery → proof → CTA) with full visual prompts, scripts, humanization rules, and regenerate conditions per scene. Includes a template library showing how the framework adapts across narrative formats (problem-solution, before/after, unboxing, review, GRWM).
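The five-scene template can be thought of as an ordered list of scene records, each carrying its prompt, script, humanization rules, and regenerate conditions. The following Python sketch shows one possible shape. The scene names come from the template above; everything else (`Scene`, `build_template`, `scenes_to_regenerate`, the placeholder prompt text, and the flag names used to trigger regeneration) is hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Set, Tuple

# Scene order taken from the narrative template described above.
SCENE_ORDER: Tuple[str, ...] = (
    "pain_point", "failed_solutions", "discovery", "proof", "cta",
)


@dataclass(frozen=True)
class Scene:
    name: str
    visual_prompt: str
    script: str
    humanization_rules: Tuple[str, ...]
    regenerate_if: FrozenSet[str]  # hypothetical flags that force a redo


def build_template(regenerate_if: Dict[str, Set[str]]) -> List[Scene]:
    """Build the five scenes in order, with placeholder prompt text."""
    return [
        Scene(
            name=name,
            visual_prompt=f"<visual prompt for {name}>",
            script=f"<script for {name}>",
            humanization_rules=(),
            regenerate_if=frozenset(regenerate_if.get(name, set())),
        )
        for name in SCENE_ORDER
    ]


def scenes_to_regenerate(
    template: List[Scene], observed: Dict[str, Set[str]]
) -> List[str]:
    """Return scene names whose observed flags hit a regenerate condition."""
    return [
        scene.name
        for scene in template
        if scene.regenerate_if & observed.get(scene.name, set())
    ]
```

Evaluating regenerate conditions per scene, rather than per video, means only the offending scene needs to be re-run while the rest of the narrative is kept.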

The result is both a production-ready prompt architecture for AIGC trust signals and a demonstration that authenticity is a framework problem, not a model problem.